This is our second blog post, published just days after the first. That's intentional.

In Blog #1, we introduced ourselves through GZS Inovacije — a platform we built for the Chamber of Commerce and Industry of Slovenia that manages their national innovation awards process. Dozens of judges, hundreds of projects, 13 regions — all on one system.

This post is about something completely different: a live quiz platform for national television during the Winter Olympics.

Two very different projects, both delivered on time, both running in production without issues. One is an admin dashboard for structured evaluation workflows. The other is a live event platform built for thousands of concurrent users — where every broadcast is a one-shot event with no room for failure.

What these projects have in common is the approach. The same stack. The same decision-making process. JT Digital is less than two months old, and we already have two production systems that prove our workflow holds up — from low-traffic admin panels to live television.

When your app runs live on national television, there's no second chance.

So let's talk about how we built it.

The Brief

Olimpijski Kviz 2026 is an interactive live quiz that runs during Milano-Cortina Winter Olympics coverage on RTV Slovenija — Slovenia's national broadcaster.

The concept: during the broadcast, a QR code appears on screen. Viewers scan it with their phone, open the quiz site, and play along in real-time. 16 questions per quiz, Kahoot-style time-based scoring — the faster you answer correctly, the more points you get.

The competition ran for 16 episodes (February 7–22, 2026). The best 10 of 16 count toward final rankings — you need to complete at least 10 quizzes to be eligible for the final standings.
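
To make the "best 10 of 16" rule concrete, here is a minimal sketch of how a final score could be derived from per-episode scores. The function name, the result shape, and the reading of "best 10" as the ten highest episode scores are our assumptions for illustration, not the production code.

```typescript
// Hypothetical helper: final standing from one player's per-episode scores.
// Assumption: "best 10 of 16" means the ten highest episode scores are summed.
const COUNTED_EPISODES = 10;

interface FinalResult {
  eligible: boolean;
  finalScore: number;
}

function finalStanding(episodeScores: number[]): FinalResult {
  // Fewer than 10 completed quizzes: not eligible for the final standings.
  if (episodeScores.length < COUNTED_EPISODES) {
    return { eligible: false, finalScore: 0 };
  }
  // Sort a copy in descending order and keep the ten best episodes.
  const best = [...episodeScores].sort((a, b) => b - a).slice(0, COUNTED_EPISODES);
  return { eligible: true, finalScore: best.reduce((sum, s) => sum + s, 0) };
}
```

A player with 12 completed quizzes is ranked on their 10 best evenings, so a couple of weak episodes do not count against them.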

Content partner: Sport Media Focus. From first conversation to live broadcast: under one month.

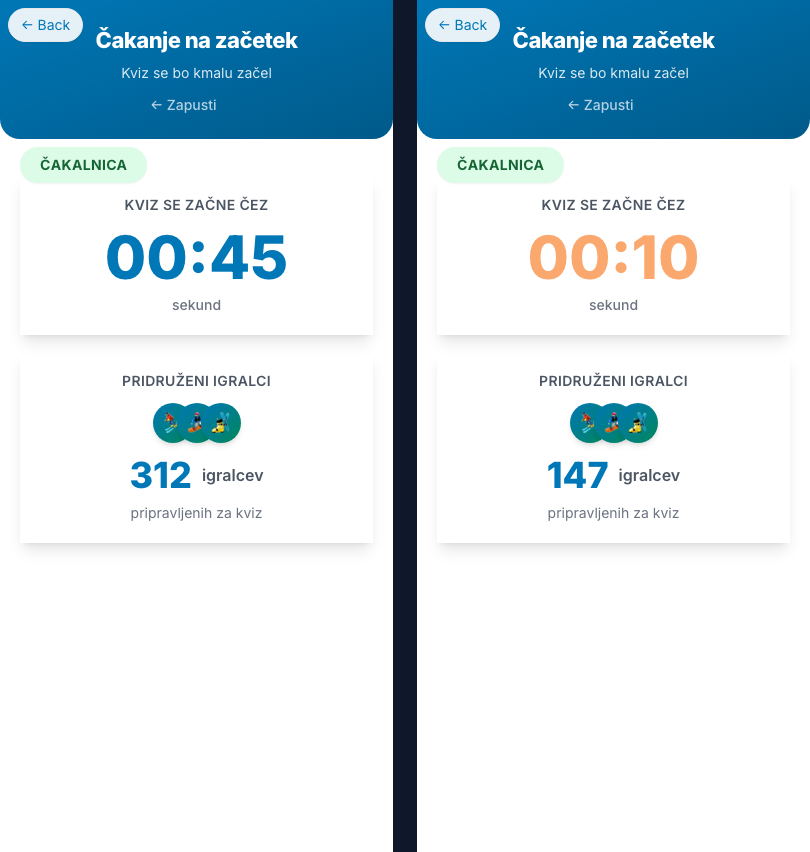

The quiz lobby — participants join via QR code and watch the crowd grow (left), then the countdown begins (right).

The user journey — from QR code on screen to final standings, all synced via 1-second HTMX polling.

The Constraints

Every constraint on this project was also a design driver. They didn't just add up — they multiplied.

Live TV. Zero tolerance for errors. Every episode is a one-shot event. There's no "we'll fix it in tomorrow's release." If the quiz breaks during a broadcast, it breaks in front of the audience.

Up to 10,000 concurrent users. Every architectural choice had to handle thundering herd scenarios. Database connections, cache invalidation, connection pooling — all under simultaneous load from users arriving within seconds of each other.

Sub-second response times. Quiz interactivity has to feel instant. If a user taps an answer and waits more than a couple of seconds, they've lost trust in the platform.

Under one month. From first conversation to first broadcast. No time for exploratory prototypes or architectural rewrites. The stack and the architecture had to be right from day one.

Synchronization. All users must see the same question at the same time. Maximum 1–3 second desync across thousands of phones watching the same broadcast.

Sponsor integration. Video expositions appear during the quiz — up to 10,000 users watching the same 20-second video simultaneously. Without proper infrastructure, that's a serious problem.

High concurrency is one thing. High concurrency on a live TV schedule with no margin for error is something else entirely.

The Stack — Same Tools, Bigger Stage

Same stack as GZS Inovacije and everything else we build: Fastify v5 + TypeScript + HTMX + fluent-html — server-rendered HTML, no client-side framework.

We didn't reach for a "real-time framework" or WebSockets. The quiz runs on server-rendered HTML polled every second via HTMX. That's it.

What changed for this project's scale was the infrastructure around the stack:

- PostgreSQL 16 + Prisma + PgBouncer (380-connection pool)

- Redis 7 — caching, distributed locks, atomic counters

- BullMQ — background job queues for leaderboard computation and emails

- PM2 — cluster mode process management across multiple servers

- Prometheus + Grafana + Loki — full observability with 40+ custom metrics

- Cloudflare R2 + CDN — sponsor video delivery to thousands of concurrent viewers

The stack didn't change. The infrastructure scaled around it.

Production architecture — 2 servers, 16 PM2 processes, PostgreSQL + PgBouncer, Redis, Cloudflare CDN, and full Prometheus/Grafana observability.

Going Deep

Infrastructure — Digital Ocean + Cloudflare

Production setup: 2 Digital Ocean Premium AMD servers — 8 vCPU / 16GB RAM each. Each server runs 7 HTTP processes plus 1 dedicated worker in PM2, totaling 16 processes across the cluster. A third server serves as backup and load testing target.

The database: PostgreSQL Premium with 4 vCPU / 16GB RAM, PgBouncer managing a pool of 380 connections. 16 Prisma clients across the cluster, each with up to 23 connections — PgBouncer multiplexes them to the actual database. Redis Premium with 4GB dedicated memory handles caching, distributed locks, and atomic counters.
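
The connection budget is simple arithmetic, sketched below with the figures from this paragraph. We assume here that the 16 Prisma clients map one-to-one to the 16 PM2 processes and that all of them could hit their connection limit at the same moment.

```typescript
// Figures quoted above; the one-client-per-process mapping is an assumption.
const prismaClients = 16;
const maxConnectionsPerClient = 23;
const pgBouncerPoolSize = 380;

// Worst case: every client has all of its connections open at once.
const worstCaseClientConnections = prismaClients * maxConnectionsPerClient; // 368
const headroom = pgBouncerPoolSize - worstCaseClientConnections; // 12
```

Even in the worst case the clients stay under the pool size, with a small margin left for migrations and ad hoc admin connections.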

Designed for 10,000 concurrent users — load testing later confirmed it could handle 15,000–20,000.

The video problem. Sponsor expositions include 20-second video clips shown mid-quiz. When the state machine transitions to SPONSOR_EXPOSITION, up to 10,000 users simultaneously request the same video file. Without a CDN, that's thousands of concurrent video streams hitting your origin at the same moment.

Solution: Cloudflare R2 (S3-compatible storage) with Cloudflare's CDN. The first request at an edge location hits origin, and every subsequent request there is served from that edge's cache. Origin serves a handful of requests; Cloudflare handles the rest of the 10,000. This isn't optional infrastructure. It's the only practical way to serve synchronized video to thousands of viewers.


Deployment runs through GitLab CI/CD. Push to main triggers sequential deployment to each server: pull, install, generate Prisma client, compile TypeScript, run migrations, zero-downtime PM2 reload. Post-deploy health checks verify PM2 process counts, HTTP endpoints, memory, and disk space.

The State Machine — 6 Phases, Zero Room for Error

The quiz lifecycle runs through 6 phases:

The quiz state machine — 6 phases with auto-advancement, distributed locking, and sponsor exposition as an alternate path.

Auto-advancement — no human button-pushing during live TV. A dedicated worker process runs a 1-second heartbeat via setInterval. Every cycle, it checks all active quizzes: has the current phase's timeout elapsed? If so, trigger the transition.
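
A minimal sketch of that heartbeat, assuming a hypothetical `ActiveQuiz` shape and two injected callbacks (`loadActiveQuizzes`, `transition`) standing in for the real data access and state machine. None of these names come from the production code.

```typescript
// Hypothetical shape of an active quiz; field names are illustrative.
interface ActiveQuiz {
  id: string;
  phase: string;
  phaseStartedAt: number; // epoch milliseconds
  phaseTimeoutMs: number;
}

// Pure check: which quizzes have outlived their current phase?
function quizzesDueForTransition(quizzes: ActiveQuiz[], now: number): ActiveQuiz[] {
  return quizzes.filter((q) => now - q.phaseStartedAt >= q.phaseTimeoutMs);
}

// Heartbeat: each tick re-evaluates every active quiz from scratch,
// so a missed or failed tick is simply picked up by the next one.
function startHeartbeat(
  loadActiveQuizzes: () => Promise<ActiveQuiz[]>,
  transition: (quiz: ActiveQuiz) => Promise<void>,
  intervalMs = 1000,
): ReturnType<typeof setInterval> {
  return setInterval(async () => {
    try {
      const due = quizzesDueForTransition(await loadActiveQuizzes(), Date.now());
      for (const quiz of due) await transition(quiz);
    } catch (err) {
      console.error("heartbeat tick failed", err); // one bad tick must not stop the loop
    }
  }, intervalMs);
}
```

Because each tick is stateless, there is no job to retry or cancel. If a tick runs longer than the interval, two ticks can overlap, which is one reason every transition also needs to take a lock.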

Why setInterval over a proper job queue like BullMQ? We tried BullMQ first. It had 10–15 second delays in edge cases — unacceptable for live TV. setInterval is self-healing: if one cycle is missed, the next catches it. No job lifecycle management, no cancellation logic. We reserved 2 of our 16 PM2 processes specifically for this worker. Sometimes the boring solution is the correct one.

Distributed locking prevents race conditions across 16 processes. Every state transition acquires a Redis-based exclusive lock before reading or writing quiz state. Without this, two servers could both read "phase = QUESTION, timeout elapsed" and both try to transition simultaneously, corrupting state.

```typescript
// Acquire: atomic SET with NX (only-if-not-exists) + 5s hard expiry.
// Resolves to 'OK' on success, or null if another process holds the lock.
const acquired = await redis.set(lockKey, lockId, 'EX', 5, 'NX');

// Release: Lua script verifies ownership atomically.
// Only the process that acquired the lock can release it.
const luaScript = `
  if redis.call("get", KEYS[1]) == ARGV[1] then
    return redis.call("del", KEYS[1])
  else
    return 0
  end
`;
await redis.eval(luaScript, 1, lockKey, lockId);
```

Redis distributed lock: atomic acquire and ownership-verified release.

The transition function follows a strict sequence: acquire lock → database transaction (validate state, validate transition legality, create audit record, update quiz) → post-transaction hooks (immediate cache population, counter resets, slow transaction alerts) → release lock in a finally block — always released, even on error.
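
The sequence above can be sketched as follows. The `Lock` interface, the `transitionQuiz` signature, the key format, and the in-memory `MemoryLock` are all hypothetical stand-ins so the example runs without Redis or a database. In production the lock would wrap the Redis calls and `runTransaction` would wrap the database transaction.

```typescript
// Hypothetical lock interface; a real one would wrap the Redis SET NX / Lua release.
interface Lock {
  acquire(key: string, ttlSeconds: number): Promise<string | null>; // lock id, or null if held
  release(key: string, lockId: string): Promise<void>;
}

async function transitionQuiz(
  lock: Lock,
  quizId: string,
  runTransaction: () => Promise<void>, // validate state, write audit record, update quiz
  afterCommit: () => Promise<void>, // populate cache, reset counters
): Promise<boolean> {
  const key = `quiz:${quizId}:transition`; // illustrative key format
  const lockId = await lock.acquire(key, 5);
  if (lockId === null) return false; // another process is handling this transition
  try {
    await runTransaction();
    await afterCommit();
    return true;
  } finally {
    await lock.release(key, lockId); // runs on success and on error
  }
}

// In-memory stand-in for the Redis lock, for local experimentation only.
class MemoryLock implements Lock {
  private held = new Map<string, string>();
  private nextId = 0;

  async acquire(key: string): Promise<string | null> {
    if (this.held.has(key)) return null;
    const id = String(++this.nextId);
    this.held.set(key, id);
    return id;
  }

  async release(key: string, lockId: string): Promise<void> {
    // Only the owner may release, mirroring the Lua ownership check.
    if (this.held.get(key) === lockId) this.held.delete(key);
  }

  isHeld(key: string): boolean {
    return this.held.has(key);
  }
}
```

The `finally` block is the important part: a failed transaction still frees the lock immediately, instead of blocking the next heartbeat until the 5-second expiry.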

Rank calculation runs as a single atomic SQL query when the quiz completes:

```sql
UPDATE "QuizAttempt"
SET "rank" = ranked.calculated_rank,
    "isCompleted" = true,
    "completedAt" = NOW()
FROM (
  SELECT id, RANK() OVER (
    ORDER BY "totalScore" DESC, "totalTime" ASC
  ) AS calculated_rank
  FROM "QuizAttempt"
  WHERE "quizId" = $1
) AS ranked
WHERE "QuizAttempt".id = ranked.id
  AND "QuizAttempt"."quizId" = $1
```

Atomic rank calculation using window functions.

All participants ranked in one query — no loops, no N+1, no race conditions.

Caching Strategy — Proactive, Not Reactive

The core insight: don't wait for cache misses.

10,000 users polling every second means 10,000 requests per second. If even 1% miss cache and hit PostgreSQL, that's 100 identical database queries per second. At scale, that kills your database.

Our solution: a background poller pushes hot data into Redis before anyone requests it. Every second, the worker fetches active quiz state from the database and writes it to Redis. Smart skip logic: if the cache exists and there are more than 2 seconds remaining in the current phase, skip the refresh — no unnecessary database reads. On phase transition, the state machine immediately populates the cache. Zero gap between the transition and cache availability.
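
The skip rule fits in a few lines. This is a sketch under the assumption that the worker knows when the current phase ends; the function name and parameters are ours, and only the 2-second threshold comes from the description above.

```typescript
const MIN_REMAINING_MS = 2000; // "more than 2 seconds remaining" means skip

function shouldRefreshCache(cacheExists: boolean, phaseEndsAt: number, now: number): boolean {
  if (!cacheExists) return true; // cold cache: always populate
  return phaseEndsAt - now <= MIN_REMAINING_MS; // close to a transition: keep it fresh
}
```

Mid-phase, the worker does no database reads at all. It only starts refreshing again in the last two seconds, when the state is about to change.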

The biggest performance win: replacing Prisma's include (which generates multiple queries) with manual SQL JOINs for the hot path. A single query with LEFT JOINs returns flat rows, which we group manually into a hierarchical structure. Result: 200+ database queries per second eliminated, a 95%+ reduction in database load during polling.
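
The manual grouping step looks roughly like this. The row and result shapes are hypothetical (a question with its answers), chosen only to show the pattern of folding flat LEFT JOIN rows back into a tree.

```typescript
// Hypothetical flat row, as a LEFT JOIN of questions and answers would return it.
interface Row {
  questionId: number;
  questionText: string;
  answerId: number | null; // null when a question has no answers (LEFT JOIN)
  answerText: string | null;
}

interface Question {
  id: number;
  text: string;
  answers: { id: number; text: string }[];
}

function groupRows(rows: Row[]): Question[] {
  const byId = new Map<number, Question>();
  for (const row of rows) {
    let question = byId.get(row.questionId);
    if (!question) {
      question = { id: row.questionId, text: row.questionText, answers: [] };
      byId.set(row.questionId, question);
    }
    // Skip the all-null answer columns a LEFT JOIN emits for childless parents.
    if (row.answerId !== null && row.answerText !== null) {
      question.answers.push({ id: row.answerId, text: row.answerText });
    }
  }
  // Map preserves insertion order, so the SQL ORDER BY carries through.
  return Array.from(byId.values());
}
```

One round-trip to the database plus a linear pass in memory replaces the separate query per relation that an ORM include tends to generate.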

We also replaced expensive SELECT COUNT(*) queries with Redis atomic counters — O(1) reads instead of full table scans under load.

HTMX Polling + Morphdom — The UX Secret

One endpoint, six views. GET /quiz/:quizId/play returns the correct HTML based on the current quiz phase. HTMX polls this every second with trigger: "every 1000ms". Swap mode: morph:outerHTML — DOM diffing via morphdom, not full replacement.
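
As a rough illustration of "one endpoint, many views", the sketch below picks a fragment by phase and wraps it in a self-polling container. It uses plain template strings rather than fluent-html to stay dependency-free. The `Phase` type lists only the three phase names that appear in this post, and the fragment contents and the `quiz` element id are placeholders we made up.

```typescript
// Only the three phase names mentioned in this post; the real state machine has six.
type Phase = "QUESTION" | "SPONSOR_EXPOSITION" | "STANDINGS";

// Hypothetical per-phase fragments; real views would be far richer.
const views: Record<Phase, string> = {
  QUESTION: "<form><!-- answer buttons --></form>",
  SPONSOR_EXPOSITION: "<video autoplay muted playsinline></video>",
  STANDINGS: "<ol><!-- leaderboard --></ol>",
};

function renderPlay(quizId: string, phase: Phase): string {
  const url = `/quiz/${encodeURIComponent(quizId)}/play`;
  // The wrapper re-polls the same URL; trigger and swap values are the ones quoted above.
  return (
    `<div id="quiz" hx-get="${url}" hx-trigger="every 1000ms" hx-swap="morph:outerHTML">` +
    views[phase] +
    `</div>`
  );
}
```

The client never needs to know which phase it is in. It keeps asking the same URL and renders whatever comes back.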

Why morphdom matters: a standard outerHTML swap would kill playing videos, reset scroll position, blur focused inputs, and cause visible flickering between phase transitions. Morphdom diffs the new HTML against the current DOM and applies only the minimal changes needed. Sponsor videos keep playing across poll updates. Phase transitions are smooth. No flicker.

The "no WebSockets" decision. One-second polling keeps desync between players to roughly one second plus network latency, inside the 1–3 second budget and perfectly acceptable for a quiz. Server-rendered HTML with polling is dramatically simpler than WebSocket state management across 16 processes. No client-side state to synchronize, no reconnection logic, no message ordering. For this use case, "real-time enough" beats "truly real-time but complex."

Observability — You Can't Fix What You Can't See

40+ custom Prometheus metrics feeding three Grafana dashboards — app performance, infrastructure health, and live quiz operations.

During every live broadcast, these dashboards are open on a second screen, and we watch the metrics in real time while the quiz runs on television. This isn't a nice-to-have. It's how you know the system is healthy before users report that it isn't.

Load Testing — Proving It Before Air

k6 load tests run against the actual Digital Ocean production infrastructure — not a laptop, not a staging environment. Two servers, 16 PM2 processes, managed PostgreSQL with PgBouncer, managed Redis. Digital Ocean Load Balancer in front.

We ramped all the way to 20,000 concurrent virtual users:

| Test | VUs | Req/sec | Median latency | P95 latency | Failed requests | Notes |
|---|---|---|---|---|---|---|
| Load balancer initial | 15,000 | 2,055 | 283ms | 1,408ms | 0.50% | Best LB result, keepalive off |
| 20k stress | 20,000 | 5,122 | 1,556ms | 3,204ms | 0.58% | Found TLS + TTFB bottleneck |
| Post-optimization | 2,000 | 3,478 | 16ms | 118ms | 0% | After N+1 fixes + private VPC |

The 20,000 VU test revealed real production bottlenecks you'd never find locally. TLS handshake overhead: 325ms average between load balancer and app servers. Time to first byte at 20k users: 1,537ms average. Network round-trips compound — each request makes 2–5 Redis/database calls, ~2–5ms each over the network versus ~0.1ms locally.
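
To see how the round-trips compound, here is the arithmetic with the ranges quoted above. The one assumption we add is that the calls within a request run sequentially, so their latencies add up.

```typescript
// Ranges quoted in the paragraph above.
const callsPerRequest = { min: 2, max: 5 };
const networkMsPerCall = { min: 2, max: 5 };
const localMsPerCall = 0.1;

// Assumption: calls run one after another, so latencies are additive.
const overNetwork = {
  min: callsPerRequest.min * networkMsPerCall.min, // 4 ms
  max: callsPerRequest.max * networkMsPerCall.max, // 25 ms
};
const onLocalhost = {
  min: callsPerRequest.min * localMsPerCall, // about 0.2 ms
  max: callsPerRequest.max * localMsPerCall, // about 0.5 ms
};
```

That is 4 to 25ms of pure network wait per request in production, against well under a millisecond on a developer machine, which is why these bottlenecks never show up locally.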

What we fixed based on these results:

- Migrated all connections to private VPC networking (saved ~40ms per new database connection)

- Eliminated N+1 queries: 200+ DB queries/sec removed during STANDINGS phase

- Replaced expensive COUNT queries with Redis atomic counters

- Implemented client-side countdown timer (synced to server time) so quiz fairness doesn't depend on poll latency

- Increased polling interval from 500ms to 1,000ms (50% load reduction, no UX impact)

- Fixed cache staleness window that caused thundering herd during phase transitions
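
The client-side countdown from the list above can be sketched like this. We assume the server includes its current time and the phase end time in each response. The function names and the single-sample offset estimate are illustrative, and the estimate ignores the one-way network delay of the response.

```typescript
// Offset between server and client clocks, estimated from one poll response.
// serverNow is a hypothetical timestamp the server includes in its response.
function estimateClockOffset(serverNow: number, clientReceivedAt: number): number {
  return serverNow - clientReceivedAt;
}

// Remaining time in the phase, measured on the server's clock, never negative.
function remainingMs(phaseEndsAtServer: number, clientNow: number, offset: number): number {
  return Math.max(0, phaseEndsAtServer - (clientNow + offset));
}
```

Between polls the browser can tick the countdown locally with `remainingMs(phaseEndsAt, Date.now(), offset)`, so a slow poll delays the next screen but not the timer the player sees.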

Post-optimization results at 2,000 concurrent users on production: 3,478 req/sec sustained, median 16ms, P95 118ms, 0% failure rate — 5 million requests, zero errors.

The real story isn't the final numbers — it's the iteration. We load-tested on real infrastructure, found bottlenecks that only appear at scale over real networks, fixed them, and re-tested. By air date, we had confidence backed by data, not estimates.

How Scoring Works

Kahoot-style time-based scoring — the faster you answer correctly, the more points you earn. Answer instantly and get the full 100 points. Use the entire time limit and still get 50. Wrong answers get zero.

```typescript
function calculatePointsForAnswer(
  isCorrect: boolean,
  timeSpent: number,
  timeLimit: number,
  maxPoints: number = 100
): number {
  if (!isCorrect) return 0;
  const normalizedTime = Math.max(0, Math.min(timeSpent, timeLimit));
  const multiplier = 1 - (normalizedTime / timeLimit) / 2;
  return Math.round(multiplier * maxPoints);
}

// Instant (0s)  → 1.0 × 100 = 100 points
// Quarter time  → 0.875 × 100 = 88 points
// Half time     → 0.75 × 100 = 75 points
// At time limit → 0.5 × 100 = 50 points
```

Time-based scoring: faster correct answers earn more points.

What We Learned

1. The same stack scales. Fastify + HTMX + fluent-html works for a 50-user admin dashboard (GZS Inovacije) and a live TV quiz built for thousands of concurrent users. The infrastructure changes; the code patterns don't.

2. setInterval is underrated. We started with BullMQ for quiz advancement. It had 10–15 second delays in edge cases. A simple 1-second setInterval with a distributed lock has been flawless across all episodes. Sometimes the boring solution is the correct one.

3. Proactive caching changes everything. Don't wait for cache misses at scale. If you know what data users will request, put it in cache before they ask. This one pattern is the difference between 25,000 database queries per second and 2,000.

4. Monitoring during live events is non-negotiable. Grafana dashboards weren't decorative — they were operational tools. You need to see problems before users feel them.

5. CDN isn't optional for synchronized media. 10,000 users hitting play on the same video at the same moment is a specific engineering problem. Cloudflare CDN solved it completely.

6. AI-assisted development let us move faster without moving recklessly. An under-one-month timeline for a system this robust would have been extremely tight for a small team without Claude Code. Not a silver bullet — but a real multiplier on experienced engineering.

The Results

16 episodes, zero downtime, zero critical errors.

3,727 users registered over the course of the competition, with 2,365 unique participants playing across the 16 quizzes. 4,996 quiz attempts total — and a 100% completion rate. Not a single user dropped out mid-quiz.

65,409 answers submitted with an average accuracy of 41.6% — the questions were genuinely challenging. Peak participation hit 544 players in the final episode; the quietest evening drew 154.

The top player, GibčniŠportnik_4004, scored 16,047 points across all 16 quizzes — averaging over 1,000 per episode. Two sponsors ran integrated video expositions during the quizzes: Športna loterija across 7 episodes and Ford across 5.

The platform was designed for 10,000 concurrent users and load-tested to handle up to 20,000. The actual audience was smaller — but that's exactly the point. We built for the worst case because live television doesn't give you a second chance. The result: a system that never missed a beat.

Let's Talk

Building something that needs to work under pressure? Whether it's a live event platform, a high-traffic application, or a system where downtime isn't an option — we've been there. Start a conversation.